Frequently Asked Questions

What was the first machine translation system?

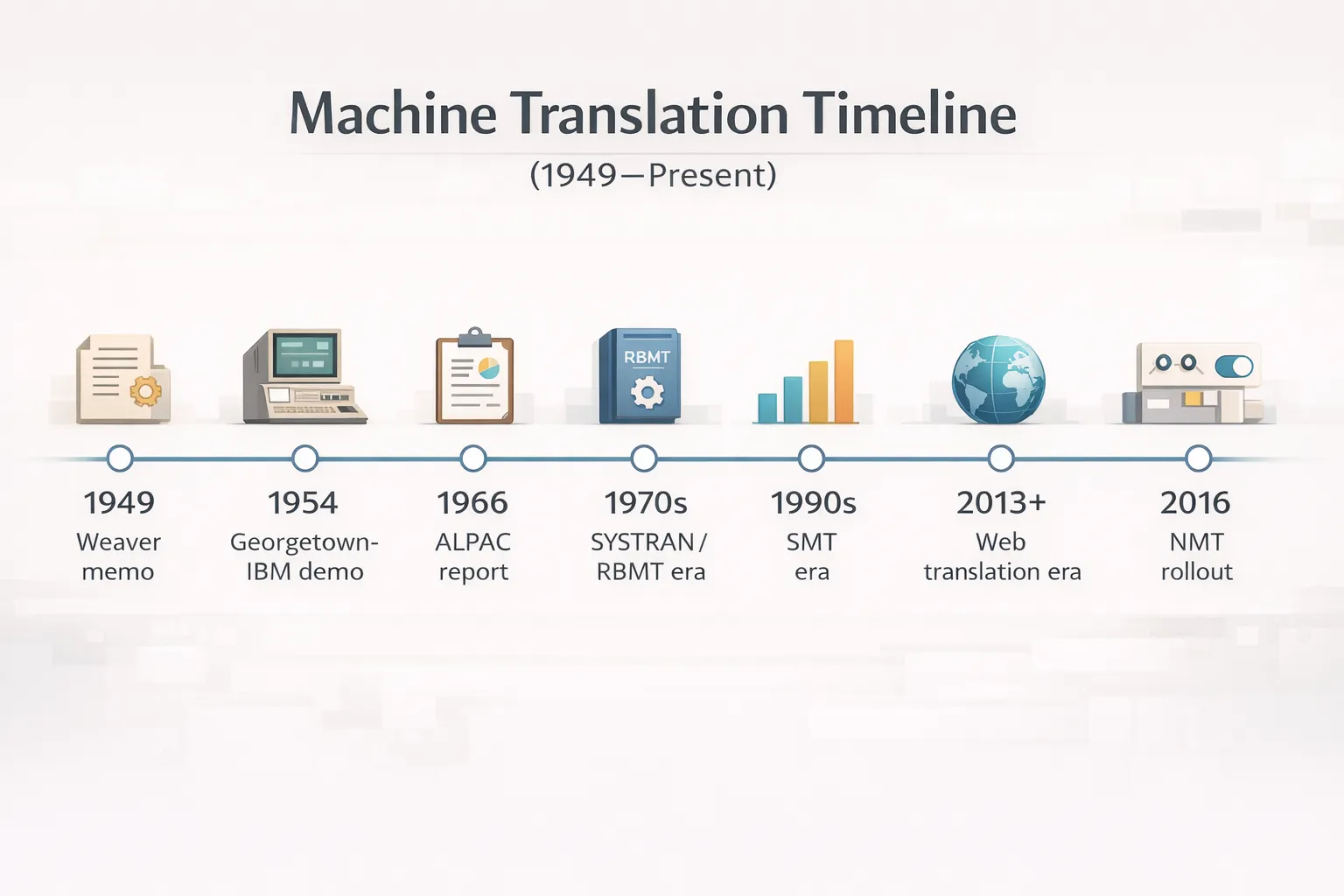

The first publicly demonstrated machine translation system was the Georgetown-IBM Experiment of January 1954. Developed by Leon Dostert (Georgetown) and IBM, it translated 60 Russian sentences into English using six grammatical rules and a 250-word vocabulary.

Who invented machine translation?

Machine translation has no single inventor, but Warren Weaver, director of the Natural Sciences Division at the Rockefeller Foundation, is generally credited with launching it as a research field. His foundational 1949 memorandum proposed applying wartime cryptographic methods to language translation.

When did neural machine translation start?

Neural machine translation began with the sequence-to-sequence (seq2seq) encoder-decoder architecture introduced by Sutskever et al. at Google Brain in 2014. Google launched its production system, GNMT (Google Neural Machine Translation), in September 2016.

What is the Transformer in machine translation?

The Transformer is a neural network architecture introduced in the 2017 paper ‘Attention Is All You Need’ (Vaswani et al., Google Brain). It replaced recurrent neural networks in MT systems with a self-attention mechanism, in which every token in a sentence is compared directly with every other token, and it is the foundation of most modern MT tools.
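
To make the self-attention idea more concrete, here is a minimal sketch of scaled dot-product attention in plain Python. It is an illustration only: a real Transformer derives the queries, keys, and values from learned projection matrices and runs several attention heads in parallel, both of which are omitted here. The function names `softmax` and `self_attention` are our own and not taken from any library.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(queries, keys, values):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V.

    Each argument is a list of equal-length float vectors, one per token.
    Assumption: learned projections and multiple heads are left out.
    """
    d_k = len(keys[0])
    width = len(values[0])
    outputs = []
    for q in queries:
        # Similarity of this token's query to every token's key.
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
            for k in keys
        ]
        weights = softmax(scores)
        # Output is the attention-weighted average of the value vectors.
        outputs.append(
            [sum(w * v[j] for w, v in zip(weights, values)) for j in range(width)]
        )
    return outputs


# Two toy "token" vectors, used as queries, keys, and values at once.
tokens = [[1.0, 0.0], [0.0, 1.0]]
attended = self_attention(tokens, tokens, tokens)
```

Each output vector is a weighted mix of all token vectors, with the largest weight going to the tokens most similar to the one being processed.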

What was the ALPAC Report and what did it do?

The ALPAC Report (1966), published by the U.S. National Research Council’s Automatic Language Processing Advisory Committee, concluded that machine translation was slower, less accurate, and twice as expensive as human translation. Its findings led to drastic cuts in U.S. government MT funding for nearly a decade.

What is statistical machine translation?

Statistical Machine Translation (SMT) is a machine translation approach that uses probabilistic models trained on large bilingual text corpora to generate translations, replacing rule-based grammar systems; it was the dominant MT paradigm from the early 1990s until approximately 2016.
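
As a rough illustration of how probabilistic models can pick a translation, the sketch below follows the classic noisy-channel formulation of SMT: choose the English sentence e that maximizes P(f|e) × P(e), where P(f|e) comes from a translation model and P(e) from a language model. All probabilities here are invented toy values for the French source "la maison bleue", and the names `TRANSLATION_MODEL`, `LANGUAGE_MODEL`, and `decode` are hypothetical. Real systems estimate these probabilities from bilingual and monolingual corpora and search a far larger candidate space.

```python
import math

# Hypothetical toy scores for the source "la maison bleue" (invented numbers).
# P(f|e): how well each English candidate explains the French source.
TRANSLATION_MODEL = {
    "the house blue": 0.30,
    "the blue house": 0.25,
    "blue the house": 0.25,
}
# P(e): how fluent each candidate is as English.
LANGUAGE_MODEL = {
    "the house blue": 0.001,
    "the blue house": 0.020,
    "blue the house": 0.0001,
}


def decode(candidates):
    """Return the candidate e maximizing log P(f|e) + log P(e)."""
    def score(e):
        return math.log(TRANSLATION_MODEL[e]) + math.log(LANGUAGE_MODEL[e])
    return max(candidates, key=score)


best = decode(list(TRANSLATION_MODEL))
```

With these toy numbers the word-for-word candidate scores highest under the translation model alone, but the language model tips the combined score toward the fluent word order.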

What machine translation systems are used today?

Four machine translation systems dominate commercial use in 2024: Google Translate (133 languages, about 100 billion words per day), DeepL (33 languages, top-rated for European language pairs), Microsoft Translator (130 languages), and Amazon Translate (75 languages, AWS enterprise focus).

What is BLEU score in machine translation?

BLEU (Bilingual Evaluation Understudy) is the primary automated metric for evaluating machine translation quality, introduced by Papineni et al. at IBM Research in 2002. It measures the n-gram overlap between machine-generated translations and human reference translations, with a penalty for output that is too short. The score is defined on a scale of 0 to 1 and is commonly reported as 0-100.
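
The sketch below shows the core of the calculation for a single sentence and a single reference: clipped n-gram precision for n = 1 to 4, combined by a geometric mean and multiplied by a brevity penalty. It is a simplified illustration, assuming whitespace tokenization and no smoothing. Standard BLEU is computed over a whole test corpus, often with several references per sentence, so scores from this sketch will not match those of production tools. The function names are our own.

```python
import math
from collections import Counter


def ngrams(tokens, n):
    """Count all n-grams (as tuples) in a list of tokens."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def sentence_bleu(candidate, reference, max_n=4):
    """Simplified single-reference BLEU on a 0-1 scale (no smoothing)."""
    cand, ref = candidate.split(), reference.split()
    if not cand:
        return 0.0
    log_precisions = []
    for n in range(1, max_n + 1):
        cand_counts = ngrams(cand, n)
        ref_counts = ngrams(ref, n)
        # Clipping: an n-gram is credited at most as often as it occurs
        # in the reference, so repeating a matching word does not help.
        matches = sum(min(c, ref_counts[g]) for g, c in cand_counts.items())
        total = sum(cand_counts.values())
        if matches == 0 or total == 0:
            return 0.0  # without smoothing, any zero precision gives 0
        log_precisions.append(math.log(matches / total))
    # Brevity penalty: candidates shorter than the reference are penalized.
    if len(cand) > len(ref):
        brevity_penalty = 1.0
    else:
        brevity_penalty = math.exp(1 - len(ref) / len(cand))
    # Geometric mean of the n-gram precisions, scaled by the penalty.
    return brevity_penalty * math.exp(sum(log_precisions) / max_n)


score = sentence_bleu("the cat sat on a mat", "the cat sat on the mat")
print(round(100 * score, 1))  # reported on the common 0-100 scale
```

A candidate identical to the reference scores 1.0 (100 on the 0-100 scale), while a candidate that shares no four-word sequence with the reference scores 0 under this unsmoothed variant.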

Does Circle Translations offer machine translation post-editing services?

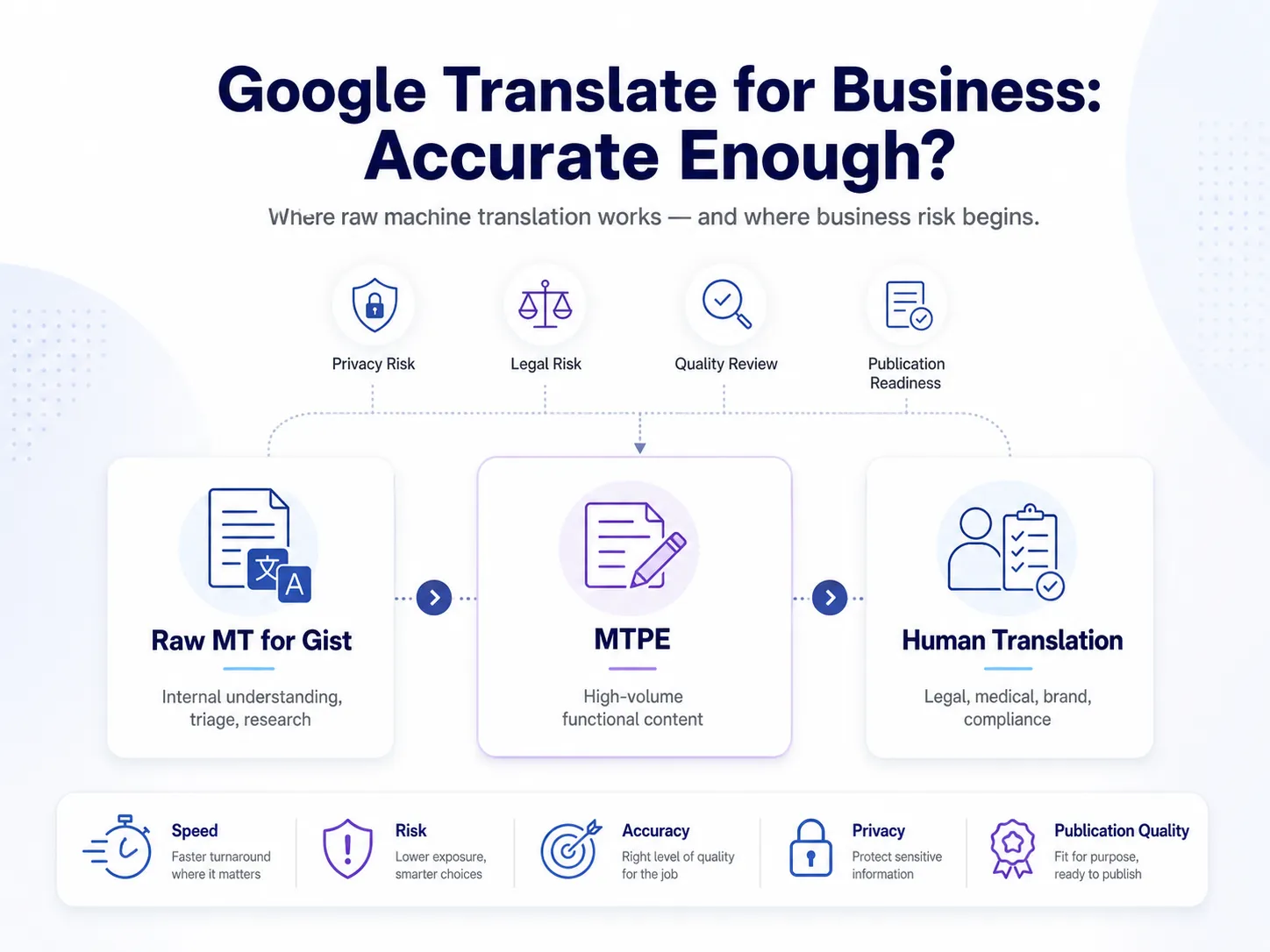

Circle Translations provides Machine Translation Post Editing (MTPE) services, in which qualified linguists review and refine machine-translated output to meet ISO 17100 quality standards. This combines MT speed with human accuracy across 73+ languages.
