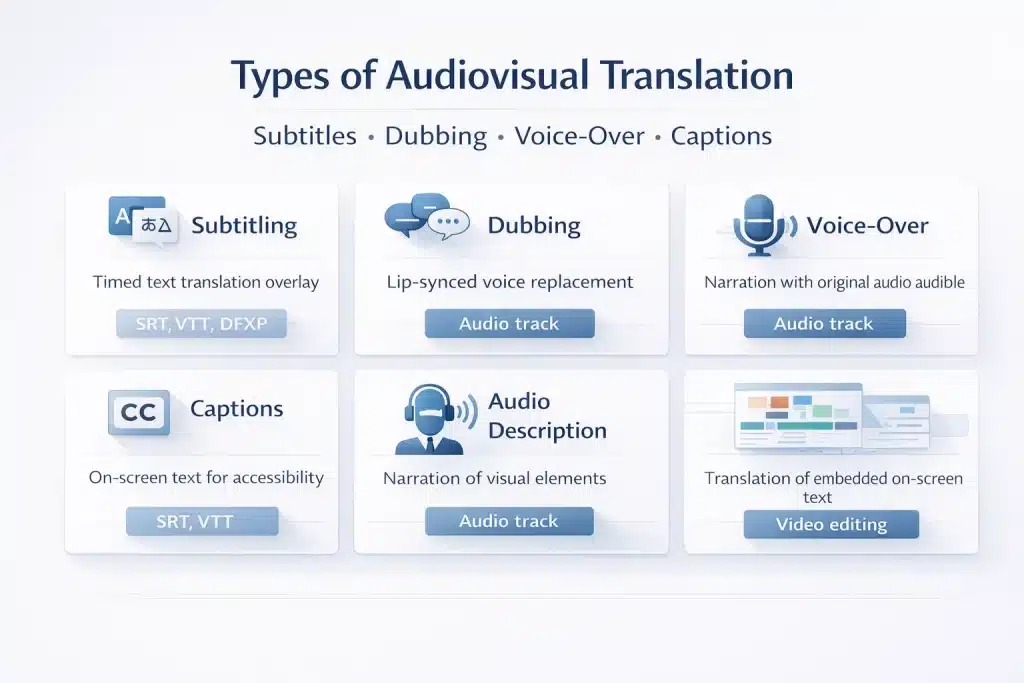

Audiovisual translation (AVT) is the discipline of transferring spoken or written language in audio and video content into another language. It includes subtitling, dubbing, voice over, closed captions, audio description, and screen text translation. Each method is applied differently depending on the content type, audience expectations, budget, and cultural preferences of the target market.

Most organisations commissioning video localization face the same initial decision: subtitling, dubbing, or voice-over. Without a clear framework, teams often choose based on cost alone or simply replicate the method used in the source market. This guide defines the main types of audiovisual translation, explains when each method should be used, provides 2026 cost benchmarks, and outlines the typical workflow used to localise video content for global audiences.

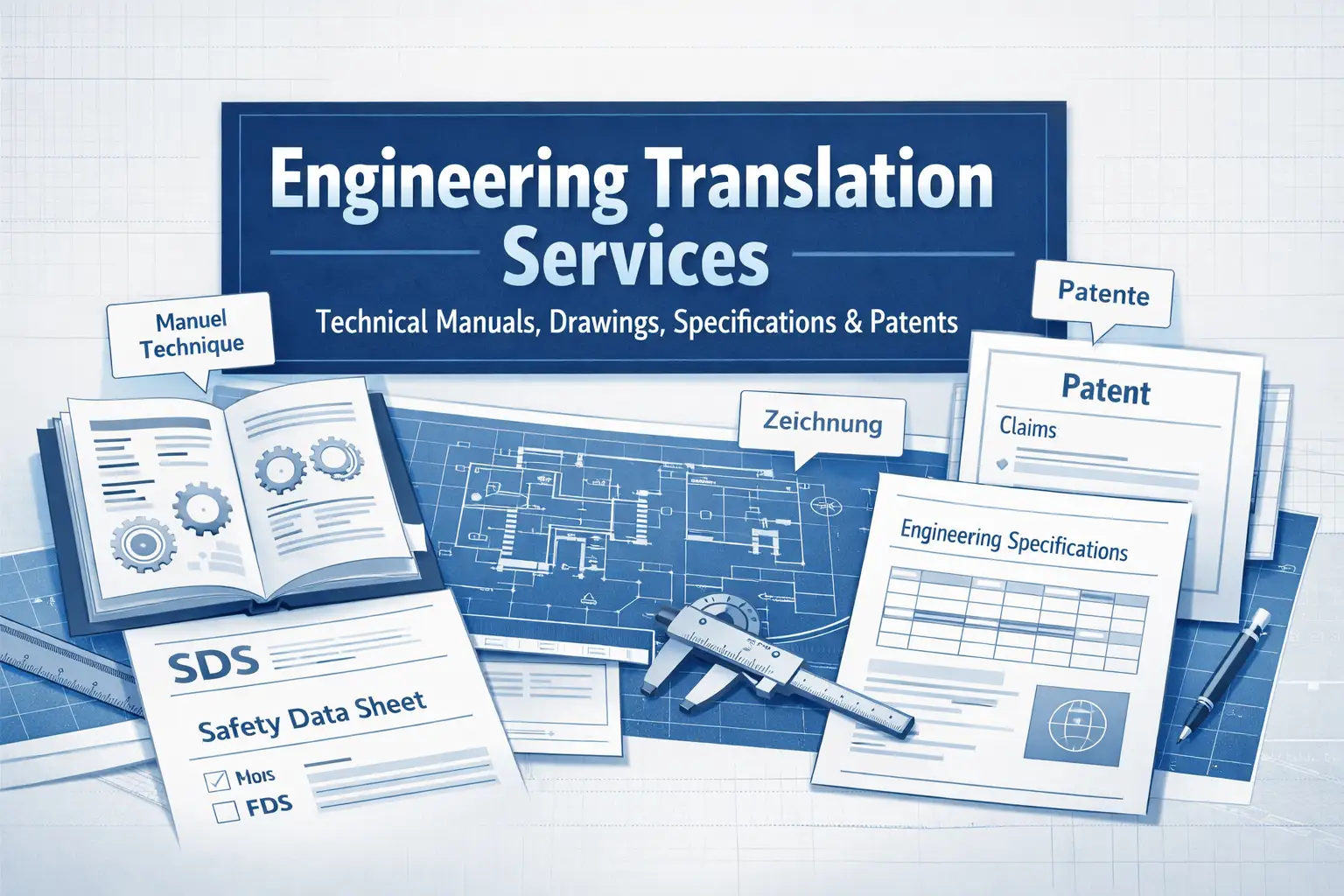

Types of Audiovisual Translation: The Complete Taxonomy from Subtitling to Audio Description

Audiovisual translation is not a single service. It includes several distinct methods, each with different technical requirements, cost structures, and use cases. When these methods are confused in a brief or RFQ, projects are often misquoted and deliverables do not match expectations.

The following overview defines the main types of audiovisual translation and where each is typically used. As Jorge Díaz Cintas and Aline Remael (2021) explain in Subtitling: Concepts and Practices, “Audiovisual translation refers to the transfer of multimodal audiovisual texts into another language and culture,” encompassing different techniques such as subtitling, dubbing, and voice-over depending on the production requirements.


| Type | Definition | Format | Typical use cases |

| Interlingual subtitles | Text overlay translates the source language dialogue into a target language | SRT, VTT, DFXP | Film, TV, streaming, corporate video, eLearning |

| Intralingual subtitles | Text overlay of the same language dialogue | SRT, VTT | Accessibility, broadcast, searchable transcripts |

| SDH (Subtitles for the Deaf and Hard of Hearing) | Subtitles including dialogue and non-speech audio cues | SRT, VTT | Accessibility compliance, WCAG, Section 508 |

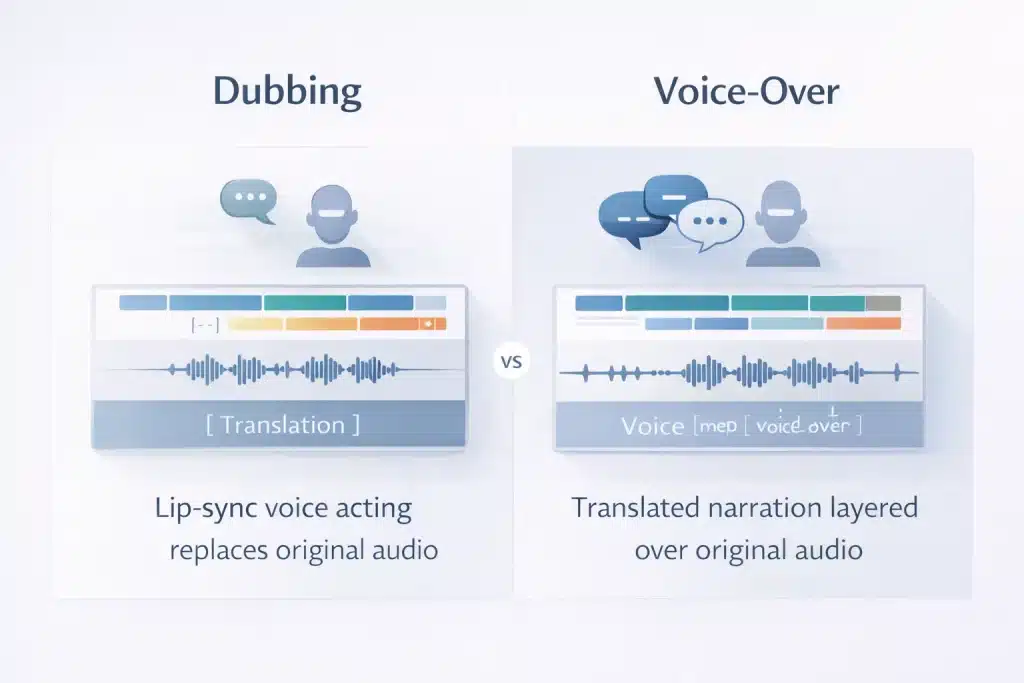

| Dubbing (lip sync) | Replacement of the original audio with lip-synced voice acting | Audio track | Cinema, OTT, children’s content |

| Voice over | Translated narration layered over original audio | Audio track | Documentary, eLearning, corporate video |

| Revoicing / loose dubbing | New translated audio without strict lip synchronisation | Audio track | News, documentary, corporate video |

| Audio description | Narrated descriptions of visual elements for visually impaired audiences | Audio track | Accessibility compliance, broadcast |

| On-screen text translation | Translation of text embedded in the video frame | Video editing / DTP | Motion graphics, titles, product demos |

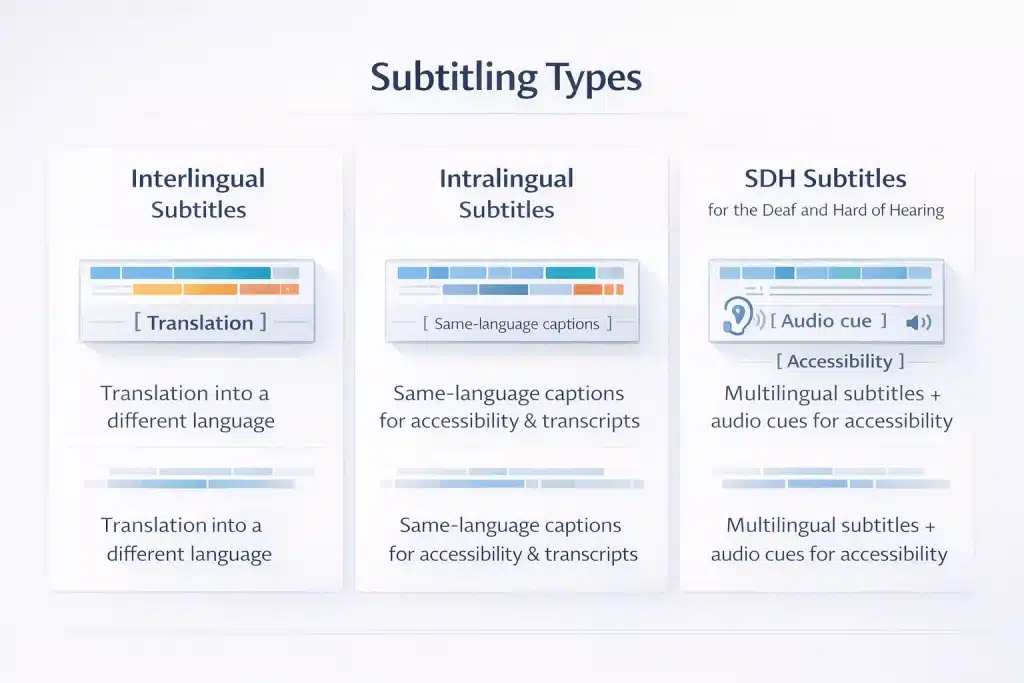

Subtitling: Interlingual, Intralingual, and SDH

Subtitling is the most widely used form of audiovisual translation. It converts spoken dialogue into timed text displayed on screen. Three formats are commonly used.

Interlingual subtitles translate dialogue from the source language into a different target language. They are the standard approach for multilingual video content such as streaming video, corporate presentations, marketing videos, and eLearning courses.

Because viewers must read while watching, subtitles are usually condensed to meet reading speed limits of about 12 to 20 characters per second and around 35 to 42 characters per line.

Intralingual subtitles display dialogue in the same language as the audio. These are often used for accessibility, viewers watching without sound, or searchable transcripts for video platforms. Although they look similar to subtitles, they are technically closer to captioning.

SDH subtitles (Subtitles for the Deaf and Hard of Hearing) include dialogue plus important audio information such as speaker identification and sound cues like [door slams] or [music playing]. SDH is required for accessibility standards such as WCAG 2.1 and Section 508 for US public sector content.

B2B compliance note: US federal government and publicly funded education content must meet ADA and Section 508 standards, which require SDH captions rather than standard subtitles.

Dubbing: Lip Sync Dubbing, Revoicing, and When Full Replacement Is Worth the Cost

Dubbing replaces the original audio track with a new recording performed by voice actors in the target language. It is the most immersive form of audiovisual translation and also the most expensive.

Lip sync dubbing is used for entertainment content such as films and television. Voice actors must match the timing and mouth movements of the on-screen characters. This requires adapted translation, professional casting, studio recording, audio mixing, and detailed synchronisation review.

Revoicing or loose dubbing records new dialogue without strict lip synchronisation. The original audio may remain faintly audible underneath the translated narration. This approach is common in documentary, news reporting, and corporate video, where precise lip sync is not essential.

| Region | Strong dubbing preference | Strong subtitling preference | Notes |
| --- | --- | --- | --- |
| Latin America | ✓ | | Entertainment and eLearning |
| Spain | ✓ | | Long dubbing tradition |
| France | ✓ | | Mainstream cinema |
| Germany, Austria, Switzerland | ✓ | | Standard for TV and film |
| Italy | ✓ | | Dominant in broadcast |
| UK, Netherlands, Nordics | | ✓ | Subtitles are common except in children’s content |
| Middle East and North Africa | ✓ | | Voice-over common for corporate video |
| Japan | Mixed | Mixed | Both methods are widely used |
| India | ✓ | | Regional dubbing markets |

If a video is distributed in markets such as Latin America, Germany, France, or the Middle East, dubbing is often expected by mainstream audiences.

Voice Over: Off-Screen Narration and Corporate Video Localization

Voice-over translation refers to translated narration layered over the original audio while the source track remains audible at low volume. This differs from dubbing, where the original audio is completely replaced.

Voice-over is widely used in several types of video.

- Corporate training and eLearning courses with a single narrator

- Documentary or interview-based content

- Product demonstrations and explainer videos

- News reporting and journalism

- Recorded conference or event presentations

The key advantage of voice-over is that the original speaker’s voice remains audible. This can preserve authenticity in interviews, executive statements, or expert commentary while still delivering the translated message.

Voice-over may also use different styles depending on the content. Documentary narration is typically neutral and informative, while character voice-over can be more expressive and closer to dubbing. AI-generated voice-overs are increasingly used in corporate and eLearning content where speed and cost efficiency are priorities.

Closed Captions, Open Captions, and Subtitles

Subtitles and captions are often confused, but they serve different purposes.

Closed captions (CC) are subtitle files that viewers can turn on or off. They include dialogue and important sound cues and are widely used on platforms such as YouTube, Vimeo, and LMS systems.

Open captions are permanently embedded into the video and cannot be disabled. They are often used on social media platforms where videos autoplay without sound.

Subtitles usually assume the viewer can hear the audio and only needs a translation of the dialogue. Captions assume the viewer cannot hear the audio and therefore include sound descriptions and speaker identification.

| Standard | Requirement | Format | Typical users |
| --- | --- | --- | --- |
| ADA Title II (US) | Captions for public video | SRT, VTT, or burned in | Public sector organisations |
| Section 508 (US) | Captions plus audio description | SRT or VTT | Federal agencies |
| WCAG 2.1 AA | Captions for prerecorded video | SRT or VTT | Organisations targeting accessibility compliance |
| Equality Act 2010 (UK) | Accessible media where reasonable | SRT or VTT | Public-facing organisations |

When ordering translation services, it is important to specify whether the requirement is subtitles for translation or captions for accessibility compliance.

Audio Description and Screen Text Translation

Two audiovisual translation tasks are often overlooked in project briefs.

Audio description (AD) is a narrated track describing visual elements such as actions, scene changes, gestures, or text appearing on screen. It allows blind or visually impaired viewers to understand visual information that is not conveyed in the dialogue.

Audio description is required for accessibility compliance under standards such as WCAG 2.1 and ADA guidelines.

Screen text translation refers to text embedded within the video frame. This includes titles, motion graphics, captions within animations, and interface text in product demonstrations. Because this text is part of the video image rather than an overlay subtitle file, it must be extracted, translated, and replaced using video editing software.

If screen text is not translated, the video appears only partially localised and may confuse viewers in the target market.

Subtitling vs Dubbing vs Voice-Over: Decision Framework for B2B Content Teams

Choosing the right audiovisual translation method depends on several factors: content type, cultural expectations in the target market, budget, delivery timeline, and the viewing experience you want to create. No single method is universally correct.

The following framework helps content teams evaluate the trade-offs between subtitling, voice-over, and dubbing.

| Criteria | Subtitling | Voice Over | Dubbing |
| --- | --- | --- | --- |
| Cost | Lowest (€5–€25 per minute) | Medium (€20–€75 per minute) | Highest (€35–€150+ per minute) |
| Turnaround | Fastest (1–3 days per language) | Moderate (3–7 days) | Slowest (5–15+ days) |
| Immersive experience | Low, the viewer reads subtitles | Medium, translated speech, but original audio audible | High, fully localised audio |
| Preserves original performance | Yes, original voices heard | Partially, original audio remains underneath | No, original audio replaced |
| Audience literacy requirement | Higher reading required | None | None |
| Best for | Film, TV, streaming, eLearning, documentaries | Corporate training, eLearning, documentary | Entertainment, OTT, children’s content |
| Cultural preference fit | Northern and Central Europe, Nordics, APAC | Global corporate content | LATAM, MENA, Spain, France, Germany, Italy, India |

When to Choose Subtitling: Streaming, Budget Constraints, and Authenticity

Subtitling is often the most efficient option for international video distribution. It works particularly well for streaming content targeting European or Asia Pacific markets where subtitles are widely accepted. It is also ideal for documentaries, interviews, and testimonial videos where the original speaker’s voice carries credibility and context.

Subtitling is typically the right choice when budgets are limited, when fast turnaround is required, or when a project involves many languages delivered simultaneously.

For example, subtitling a 60-minute training course into several languages can be completed in days, while dubbing the same content could take weeks.

Most video platforms, such as YouTube, Vimeo, and learning management systems, also require subtitle files for accessibility or captioning support. This makes subtitles a practical baseline for global content distribution.

Subtitling may be less effective for children’s content or for markets with strong dubbing expectations, such as Latin America, Germany, France, and the Middle East.

When to Choose Dubbing: Regional Preferences and Full Localization

Dubbing replaces the original audio with voice acting in the target language. It delivers the most immersive experience because viewers hear the dialogue in their own language without needing to read.

This approach is widely expected in many markets, including Latin America, Spain, France, Germany, Italy, the Middle East, and India. In these regions, subtitled content often performs poorly in mass market entertainment or consumer marketing campaigns.

Dubbing is also essential for children’s programming, where viewers cannot reliably read subtitles while watching. High production value marketing campaigns, cinema releases, and OTT streaming content also benefit from dubbing because the fully localised audio signals production quality.

However, dubbing requires more time and budget due to voice casting, studio recording, audio mixing, and synchronisation. It is usually not suitable for tight deadlines or for videos where the original speaker’s voice carries authority, such as executive announcements or expert interviews.

When to Choose Voice Over: Corporate Training and Documentary Content

Voice-over sits between subtitling and dubbing in both cost and production complexity. In this method, the translated narration is recorded and layered over the original audio, which remains audible underneath at low volume.

Voice-over is widely used for corporate training videos, eLearning courses, documentary content, and recorded presentations. It works particularly well when the video has a single narrator or when on-screen speakers do not require precise lip synchronisation.

Because the original audio remains audible, voice-over preserves the authenticity of interviews or expert commentary while still making the content understandable to new audiences. This balance makes it the most common approach for global corporate communications.

Many organisations also adopt a hybrid approach. Subtitles may be used as the default format across multiple markets, while voice-over is added for regions where spoken localization improves engagement.

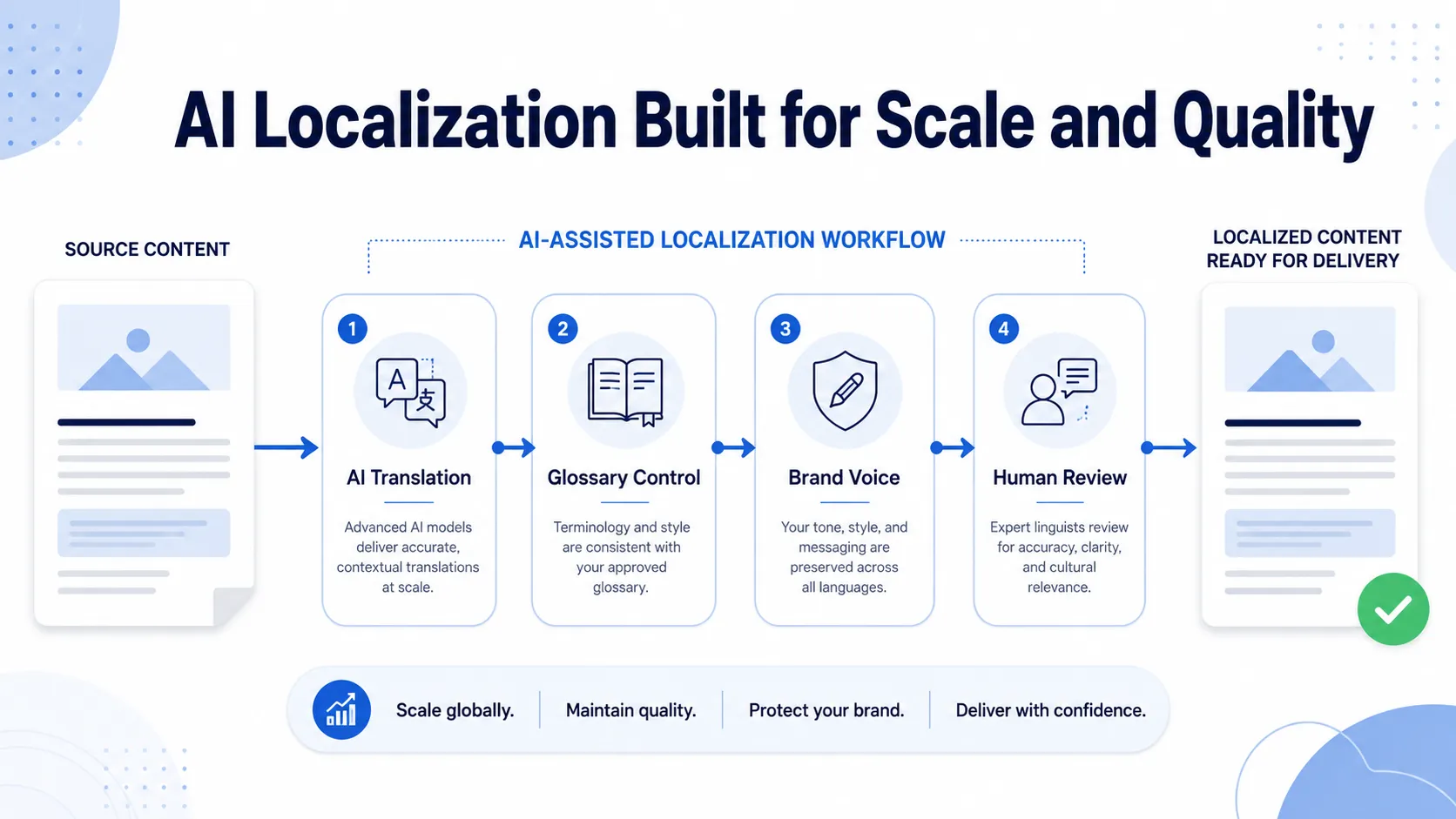

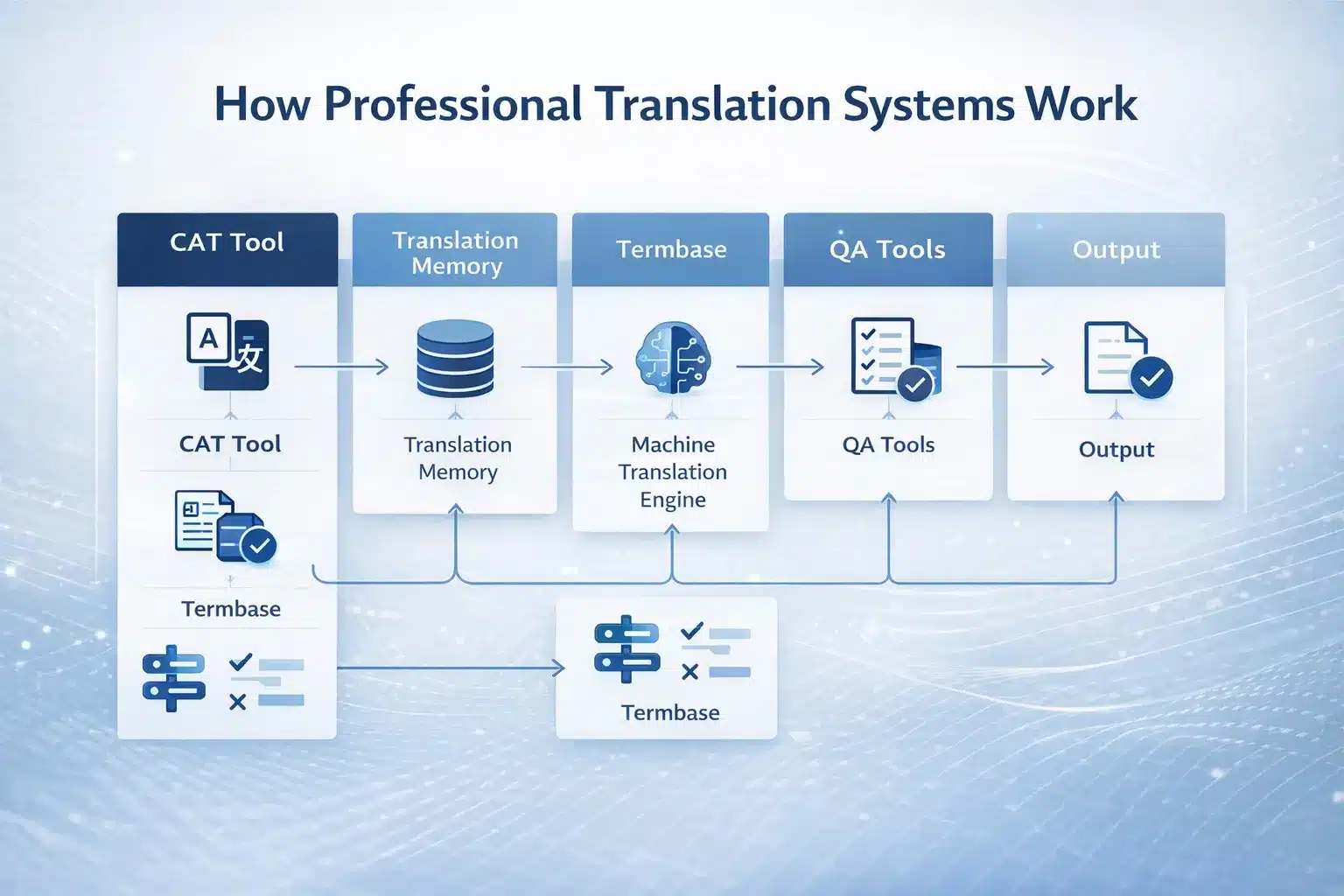

Audiovisual Translation Workflow: From Source Video to Localised Deliverable

Every audiovisual translation project follows a structured workflow regardless of whether the method is subtitling, voice-over, or dubbing.

Understanding this process helps avoid the most common causes of delays and cost overruns, which usually occur when key inputs such as transcripts, final video files, or delivery specifications are missing at project start.

Step 1: Transcription

The first step in any audiovisual translation project is creating an accurate source transcript. If a script or transcript is not provided by the client, the translation team must produce one from the audio. This may involve automatic speech recognition followed by human review, or full manual transcription for complex audio, multiple speakers, or strong accents.

Providing a verified source script significantly reduces both cost and the risk of transcription errors.

Information that should be provided at the start of an AV translation project includes:

• Final locked video file. Rough cuts should not be used because any later edit changes invalidate existing time codes

• Source language transcript or script if available

• Previous translations or terminology references for consistency

• Speaker list or character descriptions for dubbing and voice-over casting

• Target delivery formats and platform requirements

• Accessibility or compliance requirements such as WCAG, ADA, or Section 508

Step 2: Spotting and time coding

In subtitle production, the next step is spotting. A specialist spotter defines when each subtitle appears and disappears on screen. This creates the time-coded structure that translators will work within.

Spotting requires careful attention to shot changes, reading speed limits, natural sentence breaks, and platform formatting guidelines. A well-prepared spotting file ensures that subtitles remain readable and properly synchronised with the video.

For multilingual projects, a single spotting template can be reused across all languages. This dramatically reduces cost and ensures that all language versions remain synchronised with the same timing structure.
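As a rough illustration of what a spotting file contains, the sketch below renders in and out times as SRT cues. The function names and the `(start, end, text)` tuple layout are assumptions made for this example; professional subtitling tools manage this internally.

```python
def srt_timestamp(seconds):
    """Format a time in seconds as an SRT timestamp (HH:MM:SS,mmm)."""
    total_ms = round(seconds * 1000)
    hours, rem = divmod(total_ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, ms = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{ms:03d}"


def to_srt(cues):
    """Render (start, end, text) tuples as SRT text.

    Each cue becomes: a running index, a timing line, the text,
    and a blank line separating it from the next cue.
    """
    blocks = []
    for index, (start, end, text) in enumerate(cues, start=1):
        timing = f"{srt_timestamp(start)} --> {srt_timestamp(end)}"
        blocks.append(f"{index}\n{timing}\n{text}\n")
    return "\n".join(blocks)


# Hypothetical spotted cues: in time, out time, source-language text.
spotted = [
    (1.0, 3.5, "Welcome to the course."),
    (4.0, 6.25, "Let's begin with the basics."),
]
print(to_srt(spotted))
```

The timing lines are the part that is reused across languages; only the text line of each cue changes per target language.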

Step 3: Translation and adaptation

Translation for audiovisual media requires adaptation rather than literal conversion. Subtitles must often be shortened to meet reading speed limits, usually to around 70 to 80 percent of the original spoken word count. Dubbing scripts must be adapted to match the rhythm and timing of the on-screen speech. Voice-over scripts must read naturally when spoken aloud.

Cultural references, humour, brand terminology, and regulatory language may also require transcreation to ensure the translated message works in the target market.

Step 4: Recording for dubbing and voice-over

For dubbing and voice-over projects, translated scripts are recorded in a professional studio environment.

Typical recording stages include voice casting based on language, dialect, gender, and tone; studio recording sessions with a director and sound engineer; synchronisation review for dubbing; and pacing checks to ensure the recorded audio fits the video timeline.

Some corporate and eLearning projects may use AI-generated voice-over to reduce cost and turnaround time, although human review is still required to ensure quality and natural delivery.

Step 5: Quality assurance and delivery

The final stage is quality assurance and delivery preparation.

For subtitles, this includes linguistic review, timing verification, reading speed checks, and platform compliance validation.

For dubbing and voice-over, quality control includes audio synchronisation review, voice performance evaluation, and audio level checks against broadcast or platform standards.

Typical delivery formats include:

- Subtitles: SRT, VTT (WebVTT), DFXP, or burned-in captions

- Audio tracks for dubbing or voice over: mixed audio with dialogue, music, and effects, or dialogue-only stems for post-production mixing

- Final video deliverables: rendered video files such as MP4 or MOV, or separate audio stems delivered for integration into the final video edit

Subtitling Best Practices: Technical Standards, Reading Speed, and Platform Requirements

Subtitling quality is determined not only by translation accuracy but also by technical formatting standards. Subtitles that exceed reading speed limits, use poor line breaks, or display at incorrect timing create a poor viewing experience.

The following practices reflect current professional standards used across broadcast, streaming platforms, and corporate video environments.

Reading Speed, Character Limits, and Line Breaks: The Core Subtitle Formatting Rules

Reading speed is the central constraint in subtitle production and one of the most common issues in low-quality subtitles. Viewers must be able to read each subtitle comfortably within the time it appears on screen. If the text is too dense or displayed too briefly, viewers either miss the message or stop watching.

| Standard | Reading speed | Max characters per line | Max lines | Minimum display time | Maximum display time |
| --- | --- | --- | --- | --- | --- |
| Netflix standard | 17 characters per second | 42 | 2 | 0.833 sec | 7 sec |
| BBC guidelines | 180 words per minute | 37 | 2 | 1.0 sec | 5.5 sec (news), 8 sec (drama) |
| Corporate / web video | 12–15 characters per second | 40 | 2 | 1.0 sec | 6 sec |
| SDH accessibility captions | 12 characters per second | 37–40 | 3 | 1.0 sec | 7 sec |
| Cinema subtitles | 17 characters per second | 40 | 2 | 0.5 sec | 7 sec |

Correct line breaking is also essential for readability. Subtitles should break at natural grammatical points, such as after conjunctions or before a new clause. Lines should be visually balanced where possible, and proper nouns or fixed expressions should never be split across lines.

Another critical production factor is frame rate. Subtitles must be timed against the final locked video file. If a video is later re-exported at a different frame rate, all subtitle time codes become invalid and must be rebuilt.

This is a production workflow issue rather than a translation error, which is why subtitle timing should only begin once the final edit is confirmed.
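A small worked example shows the size of the problem. It assumes cues were spotted as frame counts, or that the video was conformed to a new rate so that each frame lands at a different point in time; the function names are illustrative only.

```python
from fractions import Fraction


def frame_to_seconds(frame, fps):
    """Time in seconds at which a given frame number is displayed."""
    return Fraction(frame) / Fraction(fps)


def drift_seconds(frame, old_fps, new_fps):
    """How far a frame-based cue moves when the frame rate changes."""
    return frame_to_seconds(frame, new_fps) - frame_to_seconds(frame, old_fps)


# A cue spotted at frame 1500 of a 25 fps master ...
print(float(frame_to_seconds(1500, 25)))
# ... moves by this many seconds if the file is conformed to 24000/1001 fps.
print(float(drift_seconds(1500, 25, Fraction(24000, 1001))))
```

Because the offset grows with the frame number, later subtitles drift further than earlier ones, so a single global offset cannot repair the file.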

Multi-Language Subtitle Efficiency: The Spotting Template Method

For projects requiring subtitles in multiple languages, the spotting template method significantly reduces cost and improves timing consistency across language versions.

In this workflow, a professional spotter creates a single time-coded subtitle template using the source language. This template defines when each subtitle appears and disappears on screen.

The template is then distributed to translators working in different target languages. Each translator fills the existing time slots with the translated text while respecting character limits and reading speed requirements.

Because the timing structure is created only once, the project avoids repeating the spotting stage for each language. This method becomes particularly efficient for large multilingual projects where ten or more languages are produced simultaneously.

Languages with very different sentence structures, such as Japanese or Arabic, may still require minor timing adjustments. However, the spotting template still eliminates most duplicate work.
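The template workflow described above can be sketched in a few lines. This is an illustrative outline under stated assumptions: the template is a list of `(cue_id, start, end)` tuples, each translator returns a mapping from cue ID to text, and `fill_template` is a hypothetical helper rather than part of any subtitling tool.

```python
def fill_template(template, translations, max_chars_per_line=42):
    """Merge one language's translations into a shared timing template.

    Returns the filled cues plus a list of issues for the reviewer.
    Timings are copied from the template unchanged.
    """
    filled, issues = [], []
    for cue_id, start, end in template:
        text = translations.get(cue_id)
        if text is None:
            issues.append((cue_id, "missing translation"))
            continue
        if any(len(line) > max_chars_per_line for line in text.split("\n")):
            issues.append((cue_id, "line too long"))
        filled.append((cue_id, start, end, text))
    return filled, issues


# One template, spotted once against the locked video.
template = [(1, 0.0, 2.0), (2, 2.5, 5.0)]

# Hypothetical partial delivery from a German translator.
german = {1: "Willkommen zum Kurs."}

filled, issues = fill_template(template, german)
print(filled)
print(issues)
```

Running the same function once per target language keeps every version on identical timings and flags the cues that still need attention, including those where a language needs a manual timing adjustment.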

Reusing Translated Subtitles Across Platforms and Repurposing Assets

Translated subtitles should be treated as reusable content assets rather than one-time deliverables. When managed properly, subtitle files can support multiple distribution channels and content formats.

A single SRT subtitle file can be reused across video platforms such as YouTube, Vimeo, LinkedIn video, learning management systems, or embedded website players. Subtitle files can also be converted into full transcripts, which are valuable for accessibility compliance and for improving multilingual search visibility.
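Where a web player expects WebVTT rather than SRT, the conversion is largely mechanical: add a `WEBVTT` header and use a period instead of a comma before the milliseconds. The sketch below handles plain cues only and is an assumption-laden example; it does not deal with SRT formatting tags, positioning data, or byte-order marks.

```python
import re

# Matches an SRT timing line such as "00:00:01,000 --> 00:00:03,500".
_TIMING = re.compile(
    r"(\d{2}:\d{2}:\d{2}),(\d{3}) --> (\d{2}:\d{2}:\d{2}),(\d{3})"
)


def srt_to_vtt(srt_text):
    """Convert simple SRT text to WebVTT text."""
    normalised = srt_text.replace("\r\n", "\n")
    body = _TIMING.sub(r"\1.\2 --> \3.\4", normalised)
    return "WEBVTT\n\n" + body.strip() + "\n"


srt = "1\n00:00:01,000 --> 00:00:03,500\nWelcome to the course.\n"
print(srt_to_vtt(srt))
```

The numeric cue indices are left in place, since WebVTT accepts them as optional cue identifiers.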

Subtitle translations can also serve as a source script for voice-over production if spoken narration is added later. In addition, standard subtitles can be upgraded to SDH captions by adding speaker identification and non-speech audio cues.

For organisations producing regular video content, maintaining a translation memory for subtitles helps ensure consistent terminology and reduces translation cost over time.

In ongoing video programmes, translation memory reuse can reduce per-video translation cost by approximately 15 to 40 percent over the course of a year.

Audiovisual Translation Pricing: Cost per Minute for Subtitling, Dubbing, Voice Over, and Captions

Audiovisual translation is usually priced per minute of video rather than per word. Each method has its own cost structure depending on the type of work involved.

Subtitling focuses on translation and timing, while dubbing and voice-over include voice talent, recording, and audio engineering.

Understanding these components helps organisations plan realistic localization budgets.

| Service | Pricing model | Base range | Premium range | Notes |
| --- | --- | --- | --- | --- |
| Subtitle translation (1 language) | Per minute of video | €5–€12 / min | €15–€25 / min | Higher for rare language pairs, technical content, rush delivery |
| SDH captions | Per minute | €8–€18 / min | €22–€35 / min | Additional work to include sound cues and speaker identification |
| Intralingual captions | Per minute | €3–€8 / min | €10–€15 / min | Often generated via ASR, then human reviewed |
| Dubbing (lip sync) | Per finished minute | €35–€80 / min | €100–€150+ / min | Multiple characters and complex lip sync increase cost |
| Voice over | Per finished minute | €20–€50 / min | €65–€100 / min | A single narrator is the most common configuration |
| AI voice-over or AI dubbing | Per minute | €5–€20 / min | €25–€50 / min | Requires human review; not suitable for regulated content |
| Spotting / time coding | Per minute of video | €0.50–€2 / min | €3–€5 / min | One-time cost that can be reused across languages |
| On-screen text translation | Per hour | €40–€80 / hour | €90–€120 / hour | Requires access to After Effects or Premiere source files |
| Audio description | Per minute | €15–€35 / min | €40–€60 / min | Includes writing and recording narration |

What Drives AV Translation Costs Up: Language Pair, Speaker Count, and Sync Complexity

Several factors can increase the final cost of audiovisual translation beyond the base rate. These typically relate to language complexity, the number of speakers involved, and the technical difficulty of synchronisation.

| Cost driver | Impact on subtitling | Impact on dubbing / voice-over | Mitigation |
| --- | --- | --- | --- |
| Rare language pairs | +20–100 percent | +30–100 percent on talent fees | Budget rare languages separately |
| Speaker or character count | Minimal impact | +€30–€80 per minute for additional voices | Consolidate characters where possible |
| Lip sync complexity | Not applicable | +25–50 percent for complex sequences | Use loose dubbing or voice-over where possible |
| Rush delivery | +25–60 percent | +35–75 percent | Build translation into the production schedule |
| Missing source files for on-screen text | Not applicable | +€50–€120 per hour to recreate graphics | Retain original video project files |
| Studio requirements | Not applicable | +€100–€300 per day for studio bookings | Record multiple languages in one session |

Buyer tip: Request itemised quotes that separate translation, spotting, recording, mixing, and quality assurance. Bundled per-minute pricing makes it difficult to compare vendors or identify the main cost drivers.
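To see why itemisation matters, the sketch below builds a subtitle estimate from separate line items. The `itemised_quote` function is a hypothetical helper, and the rates are illustrative values taken from the low end of the base ranges in the pricing table, not a quotation.

```python
def itemised_quote(minutes, languages, rates):
    """Return (line items, total) for a per-minute quote.

    `rates` maps an item name to (rate_per_minute, per_language).
    Items flagged per_language are multiplied by the language count;
    one-time items such as spotting are charged once.
    """
    items = {}
    for name, (rate, per_language) in rates.items():
        multiplier = languages if per_language else 1
        items[name] = round(minutes * rate * multiplier, 2)
    return items, round(sum(items.values()), 2)


# Illustrative rates only (EUR per minute of video).
example_rates = {
    "spotting": (0.50, False),             # done once, reused across languages
    "subtitle translation": (5.00, True),  # charged for every language
}

items, total = itemised_quote(minutes=60, languages=5, rates=example_rates)
for name, amount in items.items():
    print(f"{name}: EUR {amount:.2f}")
print(f"total: EUR {total:.2f}")
```

With the items separated, it becomes clear that the one-time spotting cost is a small share of the total and that the language count is the main multiplier, which a bundled per-minute figure would hide.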

In-House vs. Vendor: When to Outsource AV Translation

Organisations producing frequent video content often consider whether to build internal translation capability or outsource to a specialised vendor. The decision depends mainly on video volume, language count, and technical complexity.

| Scenario | In-house recommended | Vendor recommended |
| --- | --- | --- |
| Fewer than 3 videos per month, 1–2 languages | | ✓ Per-project vendor is more efficient |
| 3–10 videos per month, 3–5 languages | Partial internal project management | ✓ Vendor with dedicated account manager |
| More than 10 videos per month, 5+ languages | In-house localization manager | ✓ Vendor with workflow automation and TM |
| Regulated content, such as medical or legal | | ✓ Specialist vendor required |
| Dubbing or studio recording | | ✓ Studio investment rarely justified internally |

Typical internal costs for an organisation producing around 10 videos per month in five languages can include:

- FTE localization specialist: €60,000–€90,000 per year

- Translation and subtitle software licences: €4,500–€13,000 per year

- Studio rental and recording costs: €25,000–€70,000 per year

Together, these items total roughly €89,500–€173,000 per year.

By comparison, outsourcing the same workload to a specialised vendor may cost roughly €25,000–€35,000 per year, depending on video length and language count, making vendor partnerships significantly more cost-efficient for most organisations.

How to Build an AV Translation Brief and Get Accurate Quotes

Audiovisual translation quotes vary widely because the inputs that determine cost are often missing from the initial project brief. Providing a structured brief allows vendors to quote accurately and makes different proposals easier to compare.

Video information to include:

✓ Total finished video duration

✓ Source language and dialect

✓ Target languages and dialect variants (for example, Latin American Spanish)

✓ Number of speakers or characters for dubbing or voice-over

✓ Subject matter or technical domain

✓ Final video file available at project start

✓ Source transcript or script if available

Deliverable specifications:

✓ Method required: subtitling, dubbing, voice over, captions, or combination

✓ Subtitle format: SRT, VTT, burned-in captions, or platform-specific format

✓ Audio delivery requirements such as mixed audio or dialogue-only stems

✓ On-screen text translation included or excluded

✓ Accessibility requirement, such as SDH captions or audio description

Quality and timeline:

✓ Quality level such as translation only, translation and editing, or full TEP workflow

✓ Required delivery date and whether rush production applies

✓ Target platform such as YouTube, streaming platform, or learning management system

Providing these details allows vendors to generate accurate and comparable quotes.
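Teams that send briefs regularly may find it useful to keep the checklist as structured data so that gaps are caught before a request goes out. The following is a minimal sketch covering only a few of the fields above; the `AVBrief` class, its field names, and the sample values are all assumptions made for illustration.

```python
from dataclasses import dataclass, field, fields
from typing import List, Optional


@dataclass
class AVBrief:
    duration_minutes: Optional[float] = None
    source_language: Optional[str] = None
    target_languages: List[str] = field(default_factory=list)
    method: Optional[str] = None           # e.g. "subtitling", "dubbing"
    subtitle_format: Optional[str] = None  # e.g. "SRT", "VTT"
    delivery_date: Optional[str] = None


def missing_fields(brief):
    """Names of brief fields that are still empty."""
    return [
        f.name
        for f in fields(brief)
        if getattr(brief, f.name) in (None, [], "")
    ]


brief = AVBrief(
    duration_minutes=12.5,
    source_language="en-GB",
    target_languages=["es-419"],
    method="subtitling",
)
print(missing_fields(brief))
```

Any field reported as missing is a likely source of quote variance, since the vendor has to assume a value for it.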

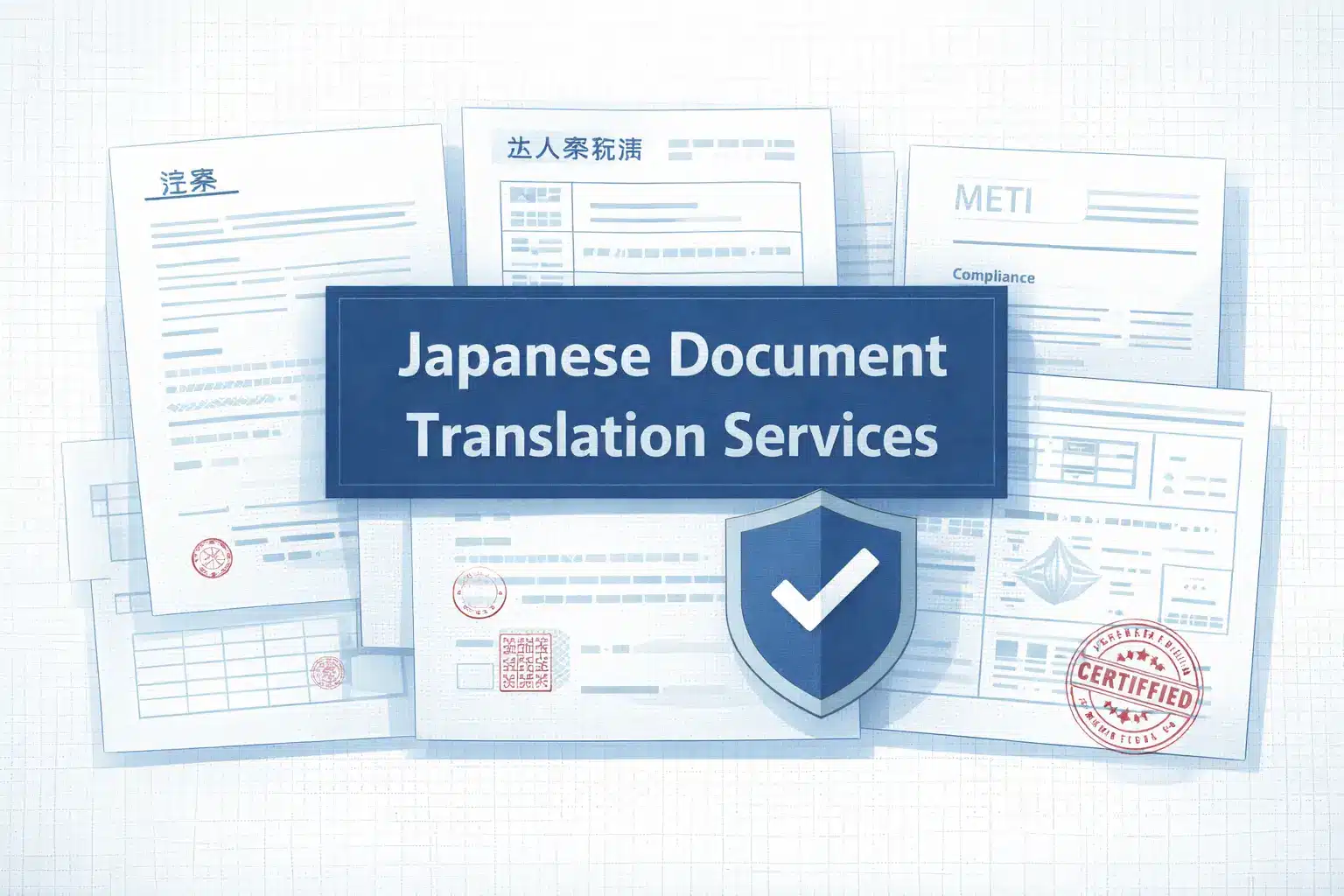

Commission Your Audiovisual Translation: Subtitling, Dubbing, Voice Over, Captions, or Full Multimedia Localization

Circle Translations delivers the full audiovisual translation stack, including subtitling, dubbing, voice over, closed captions, SDH, audio description, and on-screen text translation in more than 120 languages.

Every AV project includes:

✓ Professional human linguists for all translation and adaptation work. No raw machine translation output

✓ Spotting template workflow for multi-language delivery that reduces per-language cost

✓ Subtitle delivery in SRT, VTT, DFXP, or burned-in formats according to platform requirements

✓ Human voice talent with specified dialect, tone, and subject matter familiarity

✓ QA review covering reading speed standards, timing accuracy, and platform compatibility

✓ WCAG, ADA, and Section 508 accessibility compliance when required

✓ NDA and GDPR DPA signed before any file sharing

Submit your video scope for a free, itemised audiovisual translation quote.


Frequently Asked Questions – Audiovisual Translation

What is audiovisual translation, and what does it include?

Audiovisual translation (AVT) is the transfer of language in video and audio content from one language to another. It includes subtitling, dubbing, voice over, closed captions, SDH captions, audio description, and translation of on-screen text such as titles and graphics.

What is the difference between dubbing and subtitling?

Dubbing replaces the original audio with voice acting in the target language. Subtitling keeps the original audio and displays translated dialogue as text on screen. Dubbing provides a fully localised audio experience, while subtitles require viewers to read while watching.

How much does it cost to subtitle a video professionally?

Professional subtitle translation usually costs €5 to €12 per minute for common language pairs. Specialised content or rare languages typically cost €15 to €25 per minute. Additional costs may include subtitle spotting or on-screen text localization.

Can AI translate subtitles and dubbing reliably?

AI can generate transcripts and draft translations, but human review is required for accuracy, reading speed, and timing. AI voices are increasingly used for corporate and training content, while human voice actors remain standard for entertainment and marketing videos.

What file format should I request for translated subtitles?

SRT is the most widely supported subtitle format and works with most video platforms and players. VTT is commonly used for web video. Burned-in subtitles are embedded directly in the video and cannot be turned off.

What languages are most commonly requested for audiovisual translation?

The most requested languages include Spanish, French, German, Brazilian Portuguese, Simplified Chinese, Japanese, Arabic, Italian, Korean, and Dutch. Demand reflects global distribution markets and corporate training needs.

Do translated subtitles improve video SEO?

Yes. Subtitle files can be indexed by search engines on platforms such as YouTube. Translated subtitles improve discoverability in target languages and increase viewer engagement in international markets.

What is the typical turnaround time for subtitle translation or dubbing?

Subtitle translation for short videos typically takes one to three business days per language. Dubbing usually takes five to fifteen business days because it involves voice casting, recording, and audio mixing.

What does an audiovisual translator do?

An audiovisual translator adapts dialogue and on-screen text for video while respecting timing and platform constraints. This includes condensing text for subtitles, adapting scripts for dubbing, and ensuring cultural and linguistic accuracy.